Last time I left you with some basic optimizations, one of them being a pseudo empty-space skipping. But as I noted, the sub-volumes need to be sorted for it to work completely. We sort the sub-volumes back to front by their distance to the camera. This ensures a smooth framerate no matter what angle the camera is at. A speedup we can do here is to re-sort the sub-volumes only when the camera has moved 45 degrees since the last sort.
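To make the sorting step concrete, here is a minimal sketch in Python rather than the sample's own code. All names (`SubVolumeSorter`, `sort_back_to_front`, `volume_center`) are hypothetical. It also assumes that "moved 45 degrees" means the direction from the camera to the center of the whole volume has rotated by at least 45 degrees since the last sort. The camera is assumed never to sit exactly at the volume center.

```python
import math


def normalize(v):
    """Return v scaled to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def angle_between_degrees(a, b):
    """Angle between two direction vectors, in degrees."""
    a, b = normalize(a), normalize(b)
    dot = sum(x * y for x, y in zip(a, b))
    dot = max(-1.0, min(1.0, dot))  # guard acos against rounding error
    return math.degrees(math.acos(dot))


def sort_back_to_front(centers, camera_pos):
    """Return sub-volume indices ordered farthest-first from the camera."""
    def dist_sq(i):
        return sum((c - p) ** 2 for c, p in zip(centers[i], camera_pos))
    return sorted(range(len(centers)), key=dist_sq, reverse=True)


class SubVolumeSorter:
    """Re-sorts only when the view direction has rotated past a threshold."""

    def __init__(self, centers, threshold_degrees=45.0):
        self.centers = centers
        self.threshold = threshold_degrees
        self.last_dir = None  # view direction at the time of the last sort
        self.order = []

    def update(self, camera_pos, volume_center=(0.0, 0.0, 0.0)):
        """Return True if a re-sort happened this frame."""
        view_dir = tuple(c - p for c, p in zip(volume_center, camera_pos))
        if (self.last_dir is None or
                angle_between_degrees(view_dir, self.last_dir) >= self.threshold):
            self.order = sort_back_to_front(self.centers, camera_pos)
            self.last_dir = view_dir
            return True
        return False
```

You would call `update` once per frame and draw the sub-volumes in `order`. Small camera movements reuse the previous ordering, so the sort cost is only paid occasionally.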

So now our sub-volumes are sorted w.r.t. the camera. But we still get alpha blending artifacts because, depending on the view, the pixels of the sub-volumes are not drawn in the correct order. To fix this, we can draw a depth-only pass first and then ensure that we only draw pixels that will contribute to the final image.

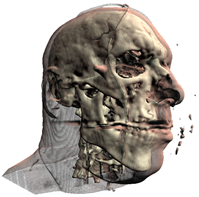

Left: no depth prepass. Right: with depth prepass.

Translucency

The first sample includes an approximated translucency. It is far from realistic, but it gives fairly good results. The idea is very similar to depth mapping: compare the current pixel's depth to the depth stored in the depth map, and use the result either to look up into a texture or to perform an exponential falloff in the shader (the sample does the latter).
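As a rough illustration of the exponential falloff, here is a minimal Python sketch rather than the sample's actual shader. It assumes the value being compared is the difference between the pixel's depth and the depth map's depth (an estimate of how much material the light has passed through), and the `density` parameter is a made-up tuning knob.

```python
import math


def translucency(pixel_depth, map_depth, density=4.0):
    """Approximate how much light survives to reach this pixel.

    pixel_depth: depth of the pixel being shaded.
    map_depth:   depth stored in the depth map for the same position.
    density:     hypothetical falloff rate; larger means more opaque.
    """
    # Assumed thickness estimate: how far the pixel lies behind the
    # surface recorded in the depth map (never negative).
    thickness = max(0.0, pixel_depth - map_depth)
    # Exponential falloff: 1.0 at zero thickness, approaching 0.0 as it grows.
    return math.exp(-density * thickness)
```

The result can be multiplied into the pixel's lighting term, so thin regions let more light through than thick ones.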

Shadows

There isn’t much to say here. The sample below uses variance shadow mapping.
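For readers who haven't seen variance shadow mapping, the core visibility test can be sketched as below. This is the standard Chebyshev upper bound written in Python, not code taken from the sample. The function name and the `min_variance` clamp value are assumptions for illustration.

```python
def vsm_visibility(mean, mean_sq, receiver_depth, min_variance=1e-5):
    """Upper bound on the fraction of light reaching the receiver.

    mean:           filtered depth from the shadow map (first moment).
    mean_sq:        filtered squared depth (second moment).
    receiver_depth: depth of the point being shaded, in the same space.
    min_variance:   assumed small clamp to avoid numerical trouble.
    """
    # In front of the average occluder: treat as fully lit.
    if receiver_depth <= mean:
        return 1.0
    # Variance from the two moments, clamped away from zero.
    variance = max(mean_sq - mean * mean, min_variance)
    d = receiver_depth - mean
    # One-sided Chebyshev inequality.
    return variance / (variance + d * d)
```

Because the shadow map stores moments rather than a single depth, it can be blurred or mipmapped like any other texture, which is what gives the soft shadow edges.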

Well, there it is. Anticlimactic, wasn't it?